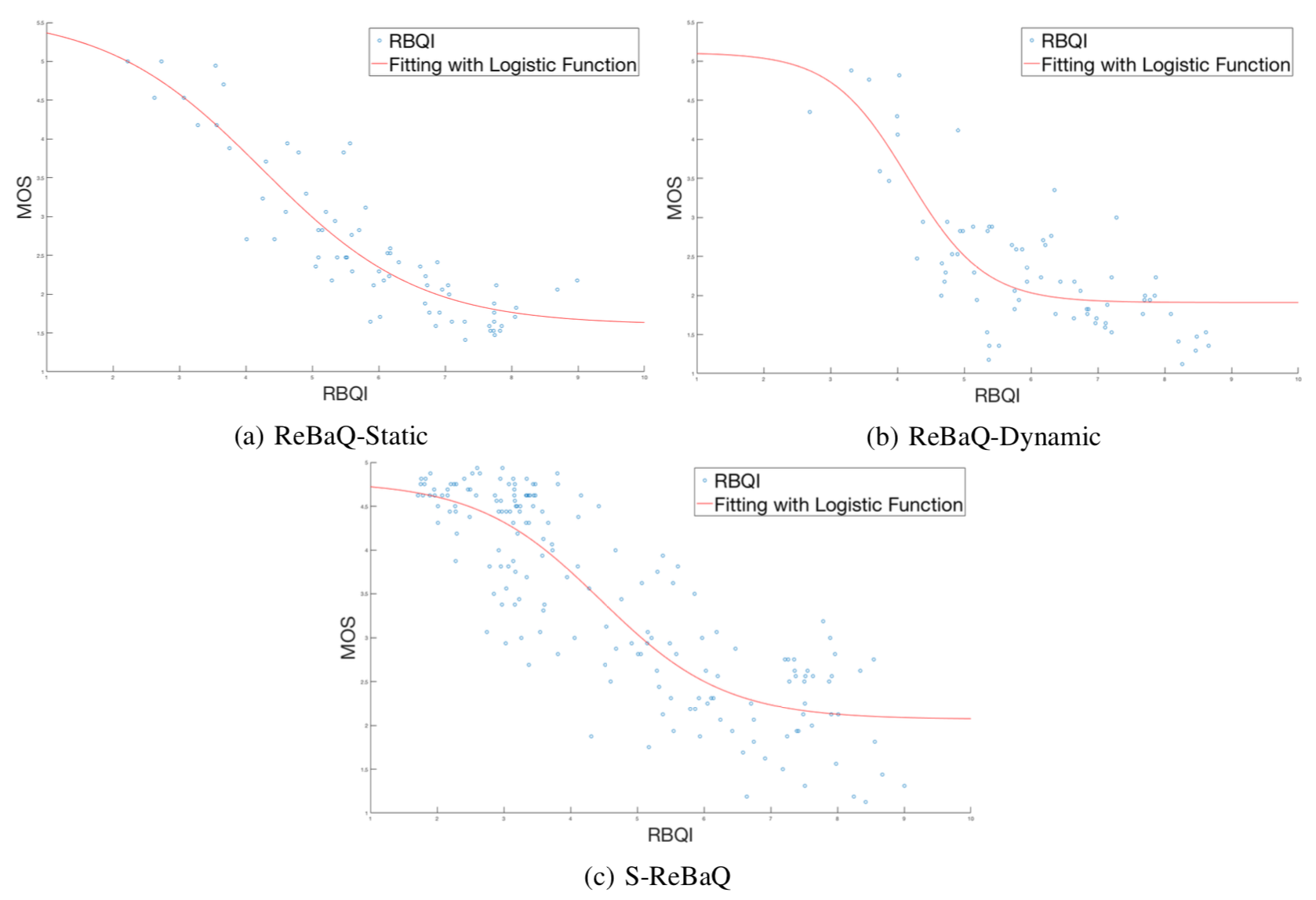

Full Reference Objective Quality Assessment for Reconstructed Background Images

With an increased interest in applications that require a clean background image, such as video surveillance, object tracking, street view imaging and location-based services on web-based maps, multiple algorithms have been developed to reconstruct a background image from cluttered scenes. Traditionally, statistical measures and existing image quality techniques have been applied to evaluate the quality of reconstructed background images. Though these quality assessment methods have been widely used in the past, their ability to predict the perceived quality of a reconstructed background image has not been verified. In this work, we discuss the shortcomings of existing metrics and propose a full reference Reconstructed Background image Quality Index (RBQI) that combines color and structural information at multiple scales using a probability summation model to predict the perceived quality of a reconstructed background image given a reference image. To compare the performance of the proposed quality index with existing image quality assessment measures, we construct two different datasets consisting of reconstructed background images and corresponding subjective scores. The quality assessment measures are evaluated by correlating their objective scores with human subjective ratings. The correlation results show that the proposed RBQI outperforms all the existing approaches. Additionally, the constructed datasets and the corresponding subjective scores provide a benchmark for evaluating future metrics developed to assess the perceived quality of reconstructed background images.
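The abstract names probability summation as the pooling step but does not give its formula. The sketch below shows a common textbook form of probability summation, not necessarily RBQI's exact model: each local distortion value d (for example, from a color or structure comparison at one scale) is mapped to a detection probability with an assumed Weibull psychometric function p = 1 − exp(−(|d|/α)^β), and independent detections are combined as P = 1 − ∏(1 − p). The function names, the distortion maps, and the parameters `alpha` and `beta` are hypothetical.

```python
import math


def probability_summation(distortion_maps, alpha=1.0, beta=3.5):
    """Pool distortion maps (one per feature/scale) into one detection probability.

    Assumptions (not taken from the RBQI description):
      * each map is a 2D list of local distortion values;
      * every location is an independent detector with a Weibull
        psychometric function p = 1 - exp(-(|d| / alpha) ** beta).
    """
    # Accumulate log "miss" probabilities: log(1 - p) = -(|d| / alpha) ** beta.
    log_miss = 0.0
    for dmap in distortion_maps:
        for row in dmap:
            for d in row:
                log_miss -= (abs(d) / alpha) ** beta
    # Probability that at least one detector notices a difference.
    return 1.0 - math.exp(log_miss)


def quality_score(distortion_maps, alpha=1.0, beta=3.5):
    """Hypothetical quality index in [0, 1]: 1 means no detectable difference."""
    return 1.0 - probability_summation(distortion_maps, alpha, beta)


# Usage: hypothetical color and structure distortion maps at two scales.
color_fine = [[0.0, 0.2], [0.1, 0.0]]
struct_fine = [[0.0, 0.4], [0.0, 0.0]]
color_coarse = [[0.1]]
struct_coarse = [[0.3]]
print(quality_score([color_fine, struct_fine, color_coarse, struct_coarse]))
```

Because log(1 − p) reduces to −(|d|/α)^β under this psychometric function, the product over detectors becomes a sum of powered distortions, which is why probability summation of this form behaves like Minkowski pooling with exponent β.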


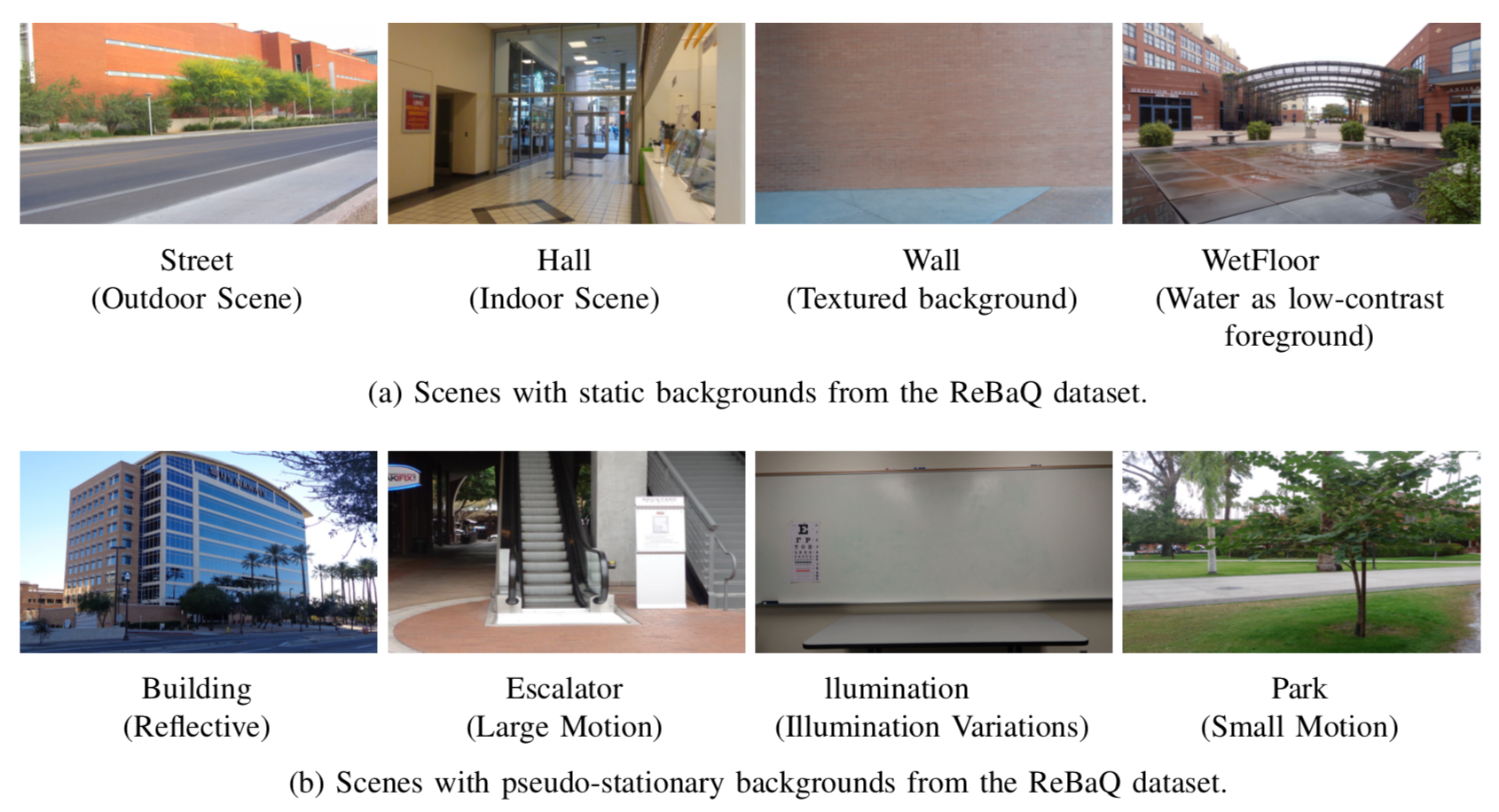

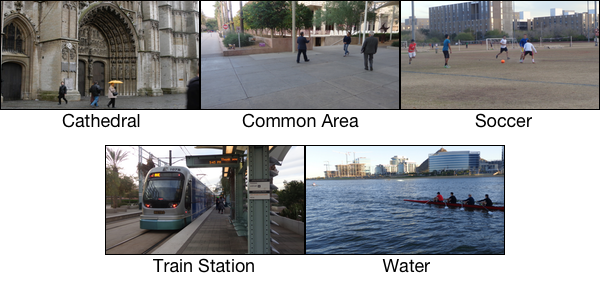

Visual quality assessment of reconstructed background images

A clean background image is of great importance in multiple applications such as video surveillance, object tracking and context-based video encoding, but acquiring a clean background image in public areas is seldom possible. Many algorithms have been developed to initialize the background from videos and images. This research presents a database consisting of 13 different scenes that can be used for benchmarking the performance of background initialization algorithms. We also conducted a subjective study on the perceptual quality of background images that are reconstructed using existing background initialization algorithms. The obtained subjective scores were used to evaluate existing image quality metrics and their capability in predicting the perceived quality of reconstructed background images.
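Evaluating a metric against subjective scores is commonly done with the Pearson linear correlation coefficient (PLCC) and the Spearman rank-order correlation coefficient (SROCC). The text does not say which coefficients were used in this study, so the following is a generic, dependency-free sketch; the example scores are made up, and the nonlinear (for example, logistic) mapping that is often fitted before computing PLCC is omitted.

```python
import math


def pearson(x, y):
    """Pearson linear correlation coefficient (PLCC) between equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)  # undefined if either sequence is constant


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank-order correlation (SROCC): Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Usage with made-up numbers: objective metric scores vs. mean opinion scores.
metric_scores = [0.91, 0.75, 0.62, 0.88, 0.40]
mos = [4.6, 3.9, 3.1, 4.2, 2.0]
print("PLCC:", pearson(metric_scores, mos))
print("SROCC:", spearman(metric_scores, mos))
```

PLCC measures how linearly the objective scores track the subjective ones, while SROCC only checks whether the metric orders the images the same way the observers did.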


a) Sample captured reference background images for different scenes. Each reference background image corresponds to a captured scene background without foreground objects.

b) Sample frames from scenes for which no reference background image was captured.


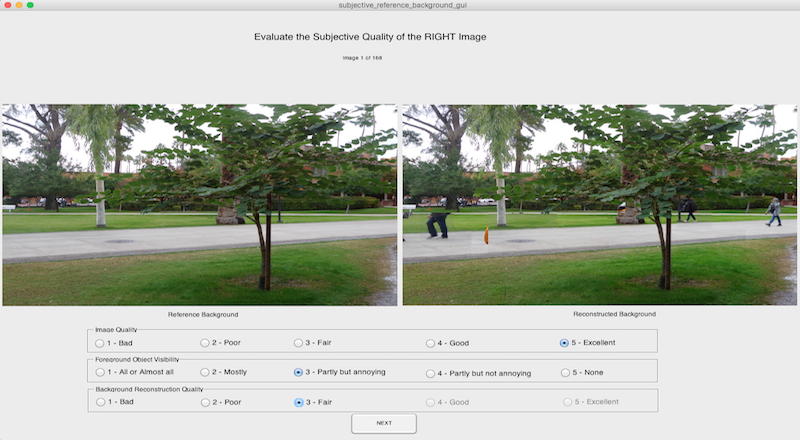

a) For images with reference background.

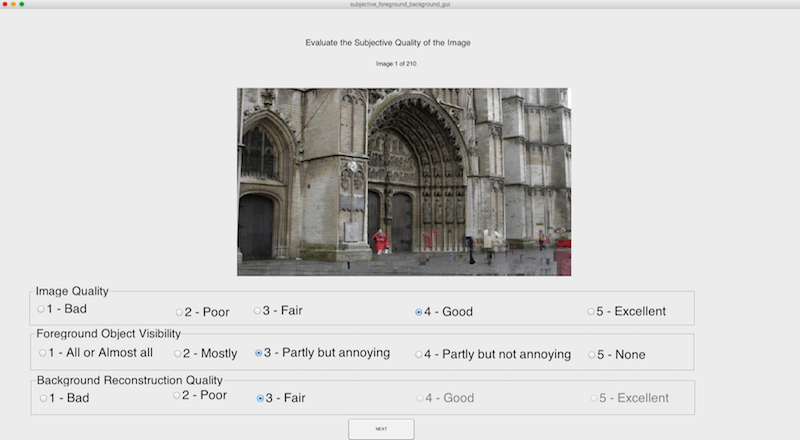

b) For images with no reference background.


Background recovery from multiple images

In this research, we propose an algorithm to extract the background by removing unwanted objects from multiple images of a scene captured by a stationary camera under varying illumination conditions. The illumination varies from image to image because different illumination sources may be present and because different foreground objects cast different shadows and reflections in each image. While this makes the background non-stationary in terms of pixel intensities, the algorithm exploits the fact that, in a suitable feature space, the background is static while the foreground is non-stationary. The extracted foreground regions are treated as holes and are filled from one of the available images in which the background at the corresponding position is unoccluded. The background pixels used to fill the holes are selected with a cost function that aims to maximize the naturalness and perceived quality of the reconstructed background.
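A much-simplified, per-pixel sketch of the hole-filling step follows. It assumes the images are already aligned (stationary camera), are grayscale, and that the foreground masks produced by the feature-space analysis are given. The median-based cost is a hypothetical stand-in for the naturalness and perceived-quality cost described above, and the function name `reconstruct_background` is illustrative; the described method fills whole hole regions rather than independent pixels.

```python
import statistics


def reconstruct_background(images, masks, base_index=0):
    """Fill the foreground pixels of images[base_index] from other images.

    images: list of aligned H x W grayscale images (2D lists of numbers).
    masks:  list of H x W boolean masks, True where foreground occludes the background.
    Returns a new 2D list; pixels occluded in every image are set to None.
    """
    base = images[base_index]
    out = [list(row) for row in base]
    for y, row in enumerate(base):
        for x in range(len(row)):
            if not masks[base_index][y][x]:
                continue  # background already visible in the base image
            # Values at (y, x) from every image where the background is unoccluded.
            candidates = [img[y][x] for img, m in zip(images, masks) if not m[y][x]]
            if not candidates:
                out[y][x] = None  # no image shows the background here
                continue
            # Hypothetical stand-in cost: distance to the median candidate,
            # i.e. prefer the value most unoccluded images agree on.
            med = statistics.median(candidates)
            out[y][x] = min(candidates, key=lambda v: abs(v - med))
    return out


# Usage: a 1 x 2 scene seen in four images; an object covers pixel (0, 1) in image 0.
images = [[[10, 200]], [[12, 50]], [[11, 52]], [[9, 90]]]
masks = [[[False, True]], [[False, False]], [[False, False]], [[False, False]]]
print(reconstruct_background(images, masks))  # [[10, 52]]
```

Because the illumination differs between images, a realistic cost would also have to penalize brightness and shadow mismatches along the hole boundary; this sketch compares raw intensities only.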
